How will teachers initially identify struggling readers?

Page 2: Universal Screening Components

Universal screening is the administration of an assessment to all students in the classroom to determine which students may be struggling with reading skills. Schools have several options for how they conduct universal screening. These decisions are usually made at the school or district level and typically reflect the needs of the students and the resources available. They relate to the following factors:

- Frequency of the screening

- Selection of the screening measure

- Criteria used to determine which students are in need of intervention

Regardless of the school or district’s plan for implementing the universal screening process, the school’s resources need to be organized so that students who are struggling can be identified and can receive the services they need to be successful.

Frequency

The universal screening may be administered between one and three times per year. If administered once, it is conducted near the beginning of the school year. This allows the teacher to identify students who are struggling early in the academic calendar.

Another option is to conduct the screenings several times per year. The purpose of the universal screening at each point in time is outlined in the table below.

| Time Administered | Purpose |
| --- | --- |
| Fall | |
| Winter | |
| Spring | |

Measure

A number of assessments can be used for universal screening, and the specific choice may be made at the local or state level. Regardless of the assessment used, alternate versions are administered if multiple screenings are conducted across the year (i.e., fall, winter, and spring). These assessments include, but are certainly not limited to, the following:

- One-minute reading assessments, such as:

  - Curriculum-based measurement (CBM) probes (e.g., DIBELS, AIMSweb, Vanderbilt University)

  - Dolch sight word lists

- Standardized reading assessments (e.g., Woodcock Reading Mastery Test-Revised [WRMT-R])

- Criterion-referenced or norm-referenced assessments (e.g., Yopp-Singer Test of Phoneme Segmentation, Comprehensive Test of Phonological Processing [CTOPP])

- The previous year’s standardized achievement test scores

Alternate version

An alternate version of an assessment tests the same skills as a standard version does, but it uses different items. Although the versions differ in content, they are equivalent in the skills they assess. For example, when assessing first-grade reading, each alternate version will contain different words, but all of them will be at the first-grade level. In other words, an alternate form has the same format as a standard form but features different content. This should not be confused with an “alternative format,” which may assess skills differently and/or use different content.

If a school system purchases a commercially available assessment product, it usually includes alternate forms. However, if a school system chooses to create its own assessment tool, it will need to create alternate forms so that each time the assessment is administered it contains slightly different content (e.g., different first-grade words, different passages at the same grade level).
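For a school system building its own tool, one simple way to produce alternate forms is to draw non-overlapping sets of items from a pool of words judged to be at the same grade level. The following Python sketch illustrates this under stated assumptions: the word pool, the function name `build_alternate_forms`, and the form sizes are all hypothetical. Random assignment keeps items from repeating across forms, but it does not by itself guarantee that the forms are equivalent in difficulty.

```python
import random


def build_alternate_forms(word_pool: list[str], num_forms: int,
                          words_per_form: int, seed: int = 0) -> list[list[str]]:
    """Hypothetical helper: split a pool of same-level words into
    non-overlapping alternate forms of equal length."""
    needed = num_forms * words_per_form
    if needed > len(word_pool):
        raise ValueError("word pool is too small for the requested forms")
    rng = random.Random(seed)                    # fixed seed so forms are reproducible
    drawn = rng.sample(word_pool, k=needed)      # draw words without replacement
    # Slice the drawn words into consecutive, non-overlapping forms.
    return [drawn[i * words_per_form:(i + 1) * words_per_form]
            for i in range(num_forms)]


# Hypothetical pool of words assumed to be at the first-grade level.
first_grade_words = ["cat", "dog", "run", "big", "see", "red",
                     "sun", "hat", "map", "sit", "top", "bed"]

# One form each for fall, winter, and spring.
forms = build_alternate_forms(first_grade_words, num_forms=3, words_per_form=4)
for season, form in zip(["Fall", "Winter", "Spring"], forms):
    print(season, form)
```

Because the words are drawn without replacement, no word appears on more than one form.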

It is important to keep in mind that the universal screening measure should be culturally valid for all students in the district or state.

Listen as Alfredo Artiles describes an example that illustrates why measures should be culturally valid (time: 1:15).

Alfredo Artiles, PhD

Professor, College of Education, Arizona State University

Co-Principal Investigator, National Center for Culturally Responsive Educational Systems (NCCRESt)

Transcript: Alfredo Artiles, PhD

Back in the ’70s, there was a series of studies done in Africa on literacy and the impact of schooling on cognitive development. People were asked to classify different terms and constructs. The examiners were not necessarily getting from the respondents the kinds of answers they were expecting to assess cognitive development because the way the respondents would address or tackle issues of classification was based on a completely different dimension or way of defining categories. When they adapted those tests, and did it in a way that was more relevant to the daily practices of those individuals, then those respondents were able to show a highly sophisticated use of cognitive skills that made sense to their own routines and cultural practices. So, to what extent were they actually getting low scores, in the first place, as a result of how the very instrument was designed without paying attention to those cultural considerations? Those are just really subtle issues that we never pay attention to, and we just assume that we never have to question the assumptions embedded in the construction of the test.

Criteria

How a school determines which students are experiencing reading difficulties depends on the measure used. The criteria for two types of universal screening measures are described below:

- Criterion-referenced measure: When using a criterion-referenced measure, a benchmark is used to differentiate struggling readers. Benchmarks identify the expected skill levels for students at each grade. Most CBM probes have established benchmarks for performance level (e.g., the Vanderbilt University Word Identification Fluency [WIF] probe benchmark for students at the beginning of first grade is 15 words read correctly in 1 minute).

- Norm-referenced measure: When using this type of measure, standard scores or percentile ranks can be used to differentiate struggling readers. These scores compare a student’s performance with that of peers across the country. The WRMT-R and standardized achievement test scores are examples of norm-referenced measures.
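To illustrate, the two criteria can be expressed as simple comparisons. In this Python sketch, the student names and scores are hypothetical. The benchmark of 15 words read correctly in 1 minute is the beginning-of-first-grade WIF benchmark cited above. The 25th-percentile cutoff for the norm-referenced measure is a hypothetical value chosen only for illustration, not a recommended standard. The sketch also assumes that a score exactly at the benchmark or cutoff is not flagged.

```python
from dataclasses import dataclass

# Beginning-of-first-grade WIF benchmark cited in the text
# (words read correctly in 1 minute).
WIF_BENCHMARK_GRADE1_FALL = 15

# Hypothetical percentile-rank cutoff for a norm-referenced measure (illustration only).
PERCENTILE_CUTOFF = 25


@dataclass
class Student:
    name: str
    wif_score: int        # words read correctly in 1 minute (criterion-referenced)
    percentile_rank: int  # national percentile rank (norm-referenced)


def below_benchmark(student: Student, benchmark: int = WIF_BENCHMARK_GRADE1_FALL) -> bool:
    """Criterion-referenced check: flag scores below the grade-level benchmark."""
    return student.wif_score < benchmark


def below_percentile(student: Student, cutoff: int = PERCENTILE_CUTOFF) -> bool:
    """Norm-referenced check: flag percentile ranks below the cutoff."""
    return student.percentile_rank < cutoff


# Hypothetical class data.
students = [
    Student("Ana", 22, 60),
    Student("Ben", 12, 30),
    Student("Cai", 9, 10),
    Student("Dee", 16, 20),
]

flagged_by_benchmark = [s.name for s in students if below_benchmark(s)]
flagged_by_percentile = [s.name for s in students if below_percentile(s)]

print("Below WIF benchmark:", flagged_by_benchmark)       # ['Ben', 'Cai']
print("Below percentile cutoff:", flagged_by_percentile)  # ['Cai', 'Dee']
```

In this made-up data, Cai is flagged by both criteria, while Ben and Dee are each flagged by only one.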

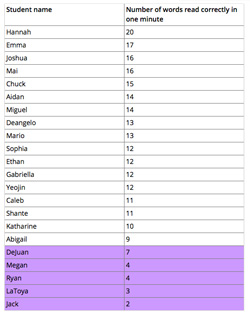

Regardless of the type of universal screening measure used, rank ordering can be used to identify the lowest performing students in a class (or in a grade level). A measure (which may be criterion-referenced, norm-referenced, or neither) is administered, and the students are rank-ordered according to their scores, highest to lowest. Those students with the lowest scores are identified as struggling readers. This may be done in several ways, depending on the available resources. The teacher can select a set number of students from among the lowest ranked (e.g., the bottom eight students), or the teacher can choose a certain percentage of the lowest performing students (e.g., the bottom 20 percent of the class).
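The rank-ordering approach can be sketched in Python as follows. The student names, scores, and function names are hypothetical. Two details are assumptions the text does not specify. First, ties are broken by the order in which students are listed. Second, when a percentage does not yield a whole number of students, the count is rounded up.

```python
import math


def rank_order(scores: dict[str, int]) -> list[tuple[str, int]]:
    """Sort students from highest to lowest score (ties keep their listed order)."""
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


def lowest_n(scores: dict[str, int], n: int) -> list[str]:
    """Return the n lowest-ranked students (e.g., the bottom eight)."""
    if n <= 0:
        return []
    ranked = rank_order(scores)
    return [name for name, _ in ranked[-n:]]


def lowest_percent(scores: dict[str, int], percent: float) -> list[str]:
    """Return the lowest-performing percent of the class, rounding the count up."""
    n = math.ceil(len(scores) * percent / 100)
    return lowest_n(scores, n)


# Hypothetical screening scores for a class of ten students.
scores = {"A": 42, "B": 15, "C": 30, "D": 8, "E": 25,
          "F": 12, "G": 37, "H": 19, "I": 5, "J": 28}

print(lowest_n(scores, 3))         # ['F', 'D', 'I']
print(lowest_percent(scores, 20))  # ['D', 'I']
```

Selecting a fixed number keeps the group size predictable for planning, whereas selecting a percentage scales with class size.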

It is important to note that different students may be identified depending on which criterion is used. This issue is explored in more detail in the activity on the following page.

Keep in Mind

As teachers determine the standard they will use to identify students who may be struggling, they should keep two considerations in mind:

- If the standard is set too high, students who do not need intervention may be identified, and the school’s resources may be strained because too many students are receiving unnecessary services.

- On the other hand, if the standard is set too low, students who are struggling may not receive needed intervention.
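This trade-off can be seen by counting how many students a given cut score flags. In this Python sketch, the scores, the cut scores, and the helper `count_flagged` are hypothetical. Raising the cut score (a higher standard) flags more students, and lowering it flags fewer.

```python
# Hypothetical first-grade screening scores (words read correctly in 1 minute).
scores = [5, 8, 12, 14, 15, 17, 19, 22, 25, 30]


def count_flagged(scores: list[int], cut_score: int) -> int:
    """Count students scoring below the cut score."""
    return sum(1 for s in scores if s < cut_score)


for cut in (10, 15, 20):
    n = count_flagged(scores, cut)
    print(f"cut score {cut}: {n} of {len(scores)} students flagged")
# cut score 10: 2 of 10 students flagged
# cut score 15: 4 of 10 students flagged
# cut score 20: 7 of 10 students flagged
```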