IRIS & Adult Learning Theory

The How People Learn (HPL) theory is the theoretical framework upon which our STAR Legacy Modules are built. HPL is based on a problem- or challenge-based approach to achieving a fuller understanding of instructional or classroom issues and challenges. This page offers a brief summary of the theory and its components. For a more in-depth examination of HPL, please view the IRIS Module How People Learn: Presenting the Learning Theory and Inquiry Cycle on Which the IRIS Modules Are Built.

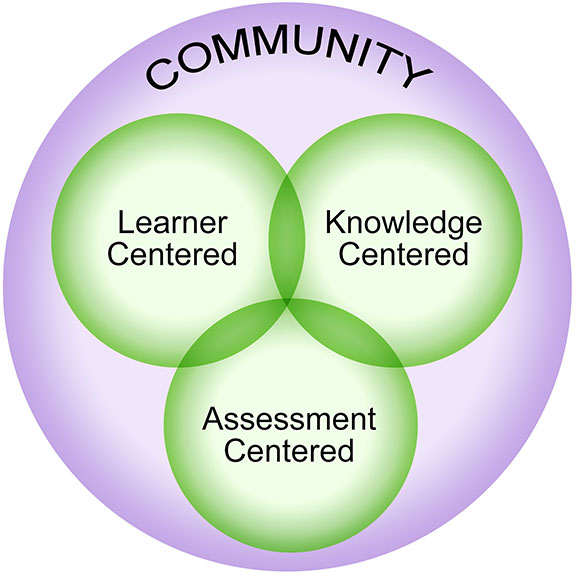

HPL offers classroom approaches that are different from the methods of instruction and assessment traditionally used in classrooms. Using the theory as their guide, John Bransford and his colleagues developed the HPL framework as a way to organize thinking about the design of effective learning environments.

Four Lenses

The HPL framework highlights four overlapping lenses that can be used to analyze and enhance any learning situation (Bransford, Brown, & Cocking, 1999). Harris, Bransford, and Brophy (2002) describe these four lenses:

Learner centeredness – Instruction is tailored, based on a consideration of learners’ prior knowledge as well as their previous experiences, misconceptions, and preconceptions.

Knowledge centeredness – Rigorous content is provided and students are helped to understand the material rather than simply to memorize it. This has implications for how instruction needs to be sequenced in order to support the comprehension and use of said knowledge in new situations.

Assessment centeredness – Frequent opportunities for monitoring students’ progress toward the learning goals are provided and the results fed back to instructors and learners.

Community centeredness – There is recognition that students are members of multiple communities (e.g., classroom, professional organizations) and that these communities offer opportunities for students and instructors to share and to learn from each other.

The STAR Legacy Cycle

Many instructors find it difficult to balance all four of the HPL lenses. For example, an instructor might successfully create a knowledge-centered learning environment but find creating a learner-centered one more challenging. At times, a sense of community might not be sufficiently promoted. Many environments also lack opportunities for frequent assessment and revision. In response to this difficulty, the STAR (Software Technology for Action and Reflection) Legacy model was designed to help introduce and balance the features of learner, knowledge, assessment, and community centeredness for instructional settings. This model uses an inquiry cycle that anchors learning, is easy to understand, and is pedagogically sound. The cycle is composed of five parts that have been repeatedly recognized in educational research as important, yet often implicit, components of learning (Schwartz et al., 1999). IRIS STAR Legacy Modules incorporate these five components, balancing the four HPL lenses.

Challenge – Modules are organized around case-based scenarios. Research shows that effective instruction often begins with an engaging scenario or challenge to introduce the lesson and invite student inquiry (Barron et al., 1998; CTGV, 1997; Duffy & Cunningham, 1996; Kolodner, 1997; NRC, 2000; Reiser et al., 2001; Williams, 1992).

Initial Thoughts – Students then generate their own ideas in order to explore what they currently know about the challenge. Discovering the extent of students’ prior knowledge and experiences regarding the problem or case-based scenario, and building upon that knowledge, is a means of enhancing learning. This can be particularly true for students from culturally diverse backgrounds, who often struggle to learn content in ways that are antithetical to their learning styles (Cobb, 2001).

Perspectives and Resources – Next, students access resources relevant to the challenge. These resources are presented as nuggets of information and may include text, interviews with experts, movies, and interactive activities. They often create “aha!” experiences when students learn about points that they did not initially consider.

Wrap Up – The cycle continues with a summary and an opportunity for the student to review his or her Final Thoughts (which are the same questions asked in the Initial Thoughts section of the module). Learning is considered to have occurred when there is disparity between initial and final thoughts, with greater disparity indicating greater learning (e.g., Bransford, 1979; Schwartz & Bransford, 1998).

Assessment – Students eventually receive assessment opportunities to apply what they know, with the opportunity to return to the Perspectives and Resources section if needed.

Research Findings

Research concerning the effectiveness of HPL and STAR Legacy has demonstrated positive outcomes in college classrooms. Roselli and Brophy (2003) found that, in an undergraduate biomechanics course, students rated modules quite positively in terms of effectively communicating key concepts and stimulating interest. In addition, systematic and structured observations of a sample of class sessions were compared for sections that relied on traditional taxonomy-based instruction versus the HPL strategy; the latter included more learner-centered, knowledge-centered, assessment-centered, and community-centered behaviors by both the instructor and students. Using a scale of 1 (low) to 5 (high), 69.7% of the students in the “HPL courses” rated communications effectiveness as a 4 or 5, compared to only 33.6% of students in the traditional courses. Fifty-seven percent of the students in the HPL course gave ratings of 4 or 5 for stimulating interest versus 26% of students in the traditional course. Both the course (53.1% vs. 23.7%) and the instructor (69.7% vs. 37.6%) were judged more favorably (i.e., received higher percentages of 4 and 5 ratings) by students in their final course evaluations.

References

Barron, B. J., Schwartz, D. L., Vye, N. J., Moore, A., Petrosino, A., Zech, L., Bransford, J. D., & CTGV. (1998). Doing with understanding: Lessons from research on problem solving and project based learning. The Journal of the Learning Sciences, 7(3&4), 271–312.

Bransford, J. D. (1979). Human cognition: Learning, understanding, and remembering. Belmont, CA: Wadsworth.

Bransford, J. D., Brown, A. L., & Cocking, R. R. (Eds.). (1999). How people learn: Brain, mind, experience, and school. Washington, DC: National Academy Press.

Cobb, P. (2001). Supporting the improvement of learning and teaching in social and institutional context. In S. M. Carver & D. Klahr (Eds.), Cognition and instruction: Twenty-five years of progress. Mahwah, NJ: Lawrence Erlbaum.

Cognition and Technology Group at Vanderbilt (CTGV). (1997). The Jasper Project: Lessons in curriculum, instruction, assessment, and professional development. Mahwah, NJ: Lawrence Erlbaum Associates.

Duffy, T. J., & Cunningham, D. (1996). Constructivism: Implications for the design and delivery of instruction. In D. H. Jonassen (Ed.), Handbook of Research for Educational Communications and Technology (pp. 170–198). New York: Macmillan.

Harris, T. R., Bransford, J. D., & Brophy, S. P. (2002). Roles for learning sciences and learning technologies in biomedical engineering education: A review of recent advances. Annual Review of Biomedical Engineering, 4, 29–48.

Kolodner, J. L. (1997). Educational implications of analogy: A view from case-based reasoning. American Psychologist, 52(1), 57–66.

Martin, T., Pierson, J., Rivale, S., Vye, N., Bransford, J., & Diller, K. R. (2007). The role of generating ideas in challenge-based instruction. In W. Aung, J. Moscinski, M. Da Graca Rasteiro, I. Rouse, B. Wagner, & P. Willmot (Eds.), World innovations in engineering education and research. Arlington, VA: iNEER.

Martin, T., Rivale, S. D., & Diller, K. R. (2007). Comparison of student learning in challenge-based and traditional instruction in biomedical engineering. Annals of Biomedical Engineering, 35(8), 1312–1323.

National Research Council (NRC). (2000). How people learn: Brain, mind, experience, and school (Expanded Edition). Committee on Developments in the Science of Learning. J. D. Bransford, A. L. Brown, & R. R. Cocking (Eds.), with additional material from the Committee on Learning Research and Educational Practice. Commission on Behavioral and Social Sciences and Education. Washington, DC: National Academy Press. [Online]. Available from http://www.nap.edu/html/howpeople1/.

O’Mahony, T. K., Vye, N. J., Bransford, J. D., Stevens, R., Stephens, R. D., Richey, M. C., Lin, K. Y., & Soleiman, M. K. (2012). A comparison of lecture-based and challenge-based learning in a workplace setting: Course designs, patterns of interactivity, and learning outcomes. The Journal of the Learning Sciences, 21, 182–206.

Reiser, B. J., Tabak, I., Sandoval, W. A., Smith, B. K., Steinmuller, F., & Leone, A. J. (2001). BGuILE: Strategic and conceptual scaffolds for scientific inquiry in biology classrooms. In S. M. Carver & D. Klahr (Eds.), Cognition and instruction: Twenty-five years of progress (pp. 263–305). Mahwah, NJ: Lawrence Erlbaum Associates, Publishers.

Roselli, R. J., & Brophy, S. P. (2003). Redesigning a biomechanics course using challenge-based instruction. IEEE Engineering in Medicine and Biology, 22, 66–70.

Schwartz, D. L., & Bransford, J. D. (1998). A time for telling. Cognition & Instruction, 16, 475–522.

Schwartz, D. L., Brophy, S., Lin, X., & Bransford, J. D. (1999a). Flexibly adaptive instructional design: A case study from an educational psychology course. Educational Technology Research and Development.

Schwartz, D. L., Lin, X., Brophy, S., & Bransford, J. D. (1999b). Toward the development of flexibly adaptive instructional designs. In C. Reigeluth (Ed.), Instructional-design theories and models: New paradigms of instructional theory, Vol. II (pp. 183–213). Mahwah, NJ: Erlbaum.

Williams, S. M. (1992). Putting case-based instruction into context: Examples from legal and medical education. The Journal of the Learning Sciences, 2(4), 367–427.